Profiling TPU Kernels - XProf, HLO, and the Roofline Model

Deep-Dive into TPU Profiling — XProf, HLO, and the Roofline Model Part 2 of the...

The Ratchet Loop — Systematically Optimizing a TPU Kernel to the Hardware Ceiling Part 3 of the KernelForge series on writing, profiling, and optimizing custom TPU kernels in Python. Part...



Writing Fast Attention on TPU — From Naive Kernel to Fused FlashAttention with Pallas Part...

Running Containerised Batch Jobs on Google Cloud Platform I needed to OCR tens of thousands...

In today’s data-driven world, video content is everywhere—from security surveillance and industrial monitoring to social...

Large Language Models (LLMs) are powerful predictors. Given a sequence of text, they excel at...

Speech synthesis has become more human-like within the last few years and has gained a higher...

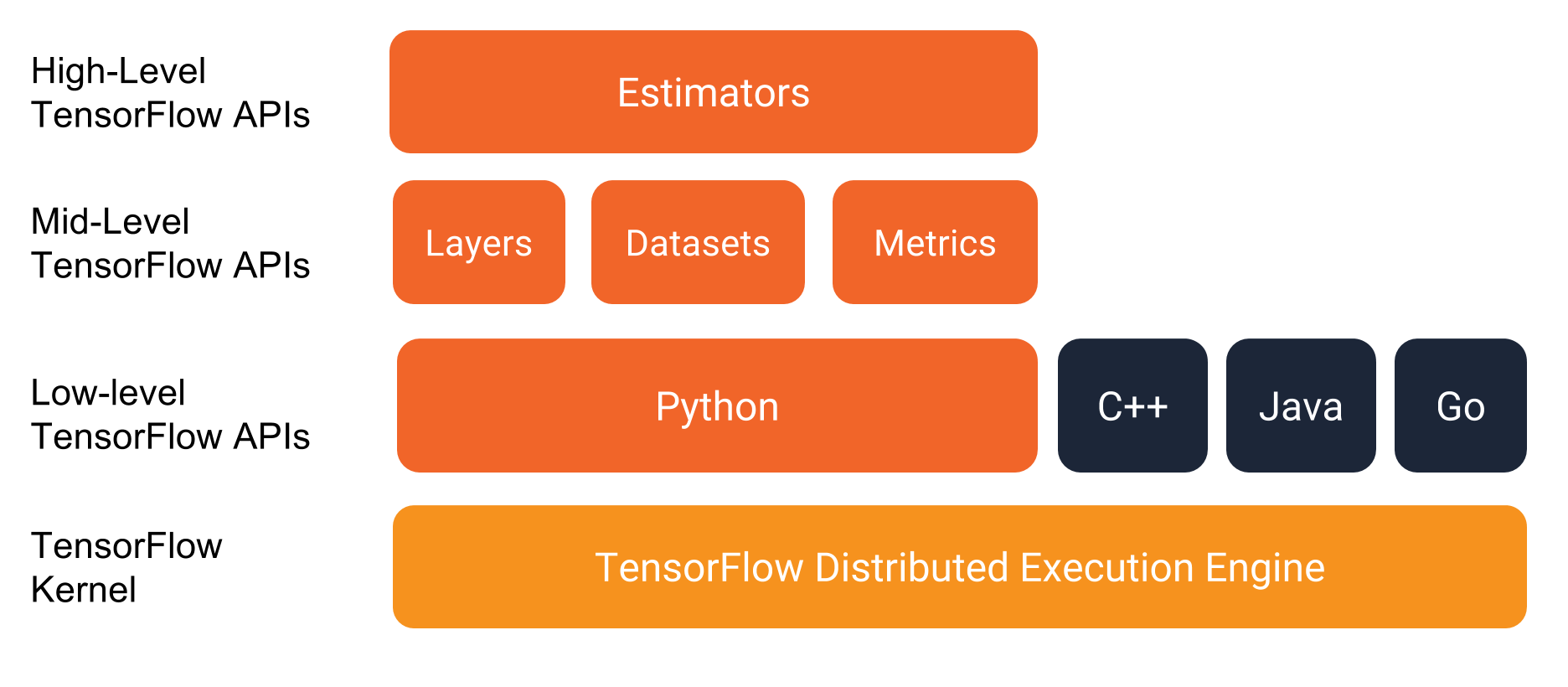

In a previous post, we discussed TensorFlow graphs and sessions. Since building a computation...